Overview

If you are using Vertica as your datawarehouse, you know how important it is to have a data catalog that can help you manage, discover and understand your data. But how do you create a data catalog for Vertica that is easy to use, scalable and reliable? That’s where Blindata comes in. Blindata is a data catalog solution that integrates seamlessly with Vertica and provides you with data lineage, metadata management, data quality and governance features. In this blog post, we will show you how to build a data catalog for Vertica with Blindata and how it can benefit your data-driven organization. Read on to learn more.

Vertica is a column-oriented, relational, and ACID-compliant database that can handle any data analytics, at any scale, anywhere. Vertica supports various data types and analytical functions, such as event and time series, pattern matching, geospatial, and built-in machine learning.

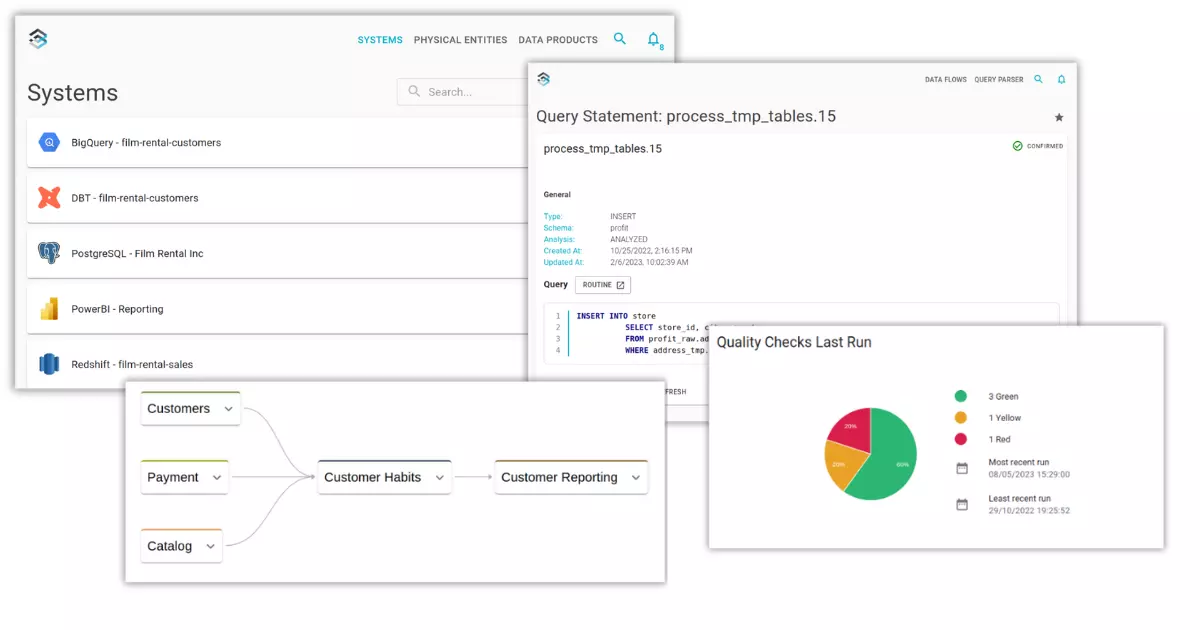

To manage, discover, and understand your data in Vertica, you need a data catalog solution like Blindata. Blindata integrates with Vertica and offers data lineage, metadata management, data quality and governance features. Blindata allows you to explore, comprehend, and trust your data across your data sources and platforms.

Data Catalog

To build a data catalog for Vertica, you need to access the information about tables, views, and projections definitions in your database. You can find this information in the system tables and views that Vertica provides for metadata management. For example:

- To get the table definitions, you can query the system view V_CATALOG.TABLES.

-- extract tables metadata

SELECT schema_name, table_name, table_type, remarks

FROM v_catalog.all_tables;

-- extract columns metadata

SELECT table_schema, table_name, column_name, data_type

FROM v_catalog.columns;

- To get the view definitions, you can query the system view V_CATALOG.VIEWS.

-- extract view definition

select table_schema,

table_name,

view_definition

from v_catalog.views;

- To get the projection definitions, you can query the system view V_CATALOG.PROJECTIONS. Including projections as well as foreign keys in the data catalog can provide useful hints to users and data analysts when they design their queries.

-- extract the list of projections

select projection_schema, projection_name, anchor_table_name,

from v_catalog.projections;

-- extract the list of projections columns

select projection_schema, projection_name, projection_column_name,

column_position, sort_position, column_expression

from v_catalog.projections_columns;

However, you don’t have to worry about querying the system tables and views manually to build your data catalog for Vertica. Blindata Agent’s connectors take care of this for you. Blindata Agent is a lightweight and secure software that connects to your data sources and platforms and extracts the metadata information automatically. Blindata Agent supports Vertica and other databases and platforms, and can be installed on-premises or on the cloud. Blindata Agent sends the metadata information to Blindata Platform, where you can access and manage your data catalog with ease.

Data Lineage

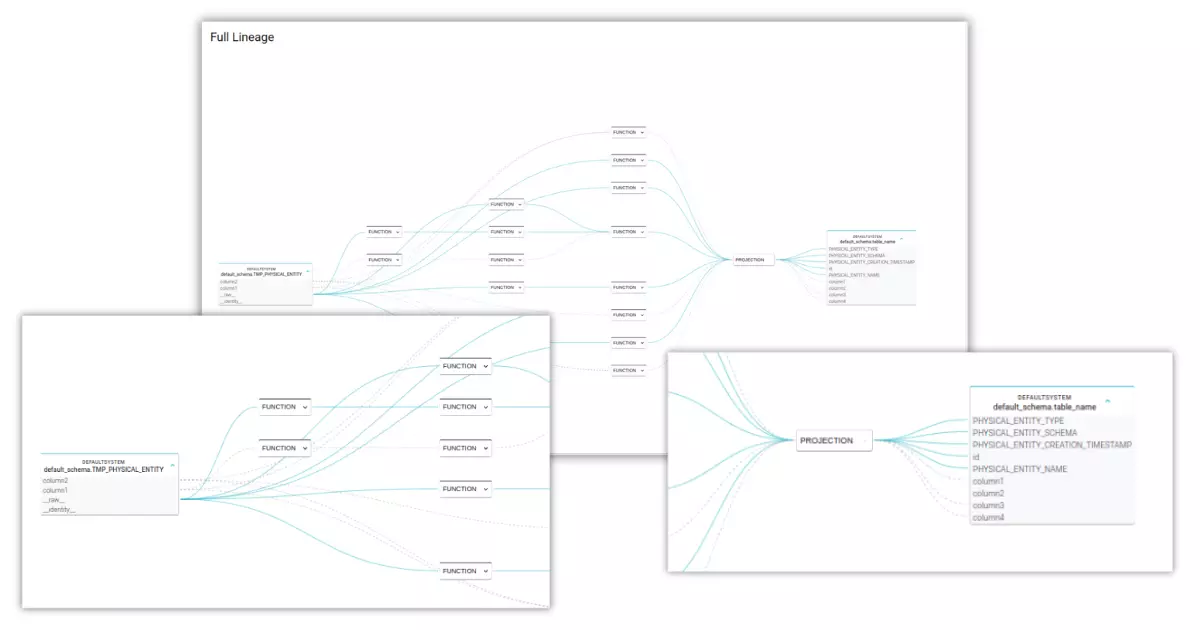

Another key feature of Blindata is its Automated SQL Lineage module that helps you easily track and manage data movements within your database. The SQL lineage module uses schema metadata and extracted SQL statements to infer data flows and transformations, including standard database objects such as views and routines, query logs, and scripts generated by ELT tools1. Blindata analyzes each single SQL statement to reconstruct the syntax tree of the SQL command and generate the data lineage by following the syntax tree connections from the source to the target tables.

Blindata’s automated SQL lineage module also supports Vertica flex tables, which are different kind of tables designed for loading and querying unstructured or semi-structured data. Blindata can extract data lineage directly from Vertica flex tables and visualize the data flows and transformations within and across Vertica flex tables and other tables.

To reverse the data lineage for Vertica, you also may need to access the information about the query log and views SQL definitions in your database. You can find this information in the following ways:

- To get the query log, you can query the system view V_MONITOR.QUERY_REQUESTS. This view provides summary information about user-issued query requests, such as the request type, request statement, request label, and success status.

SELECT user_name, request_type, request, success

FROM v_monitor.query_requests;

- To get the view SQL definitions, you can query the system table VIEWS or the system view V_CATALOG.VIEWS.

select table_schema,

table_name,

view_definition

from v_catalog.views;

Data Monitoring

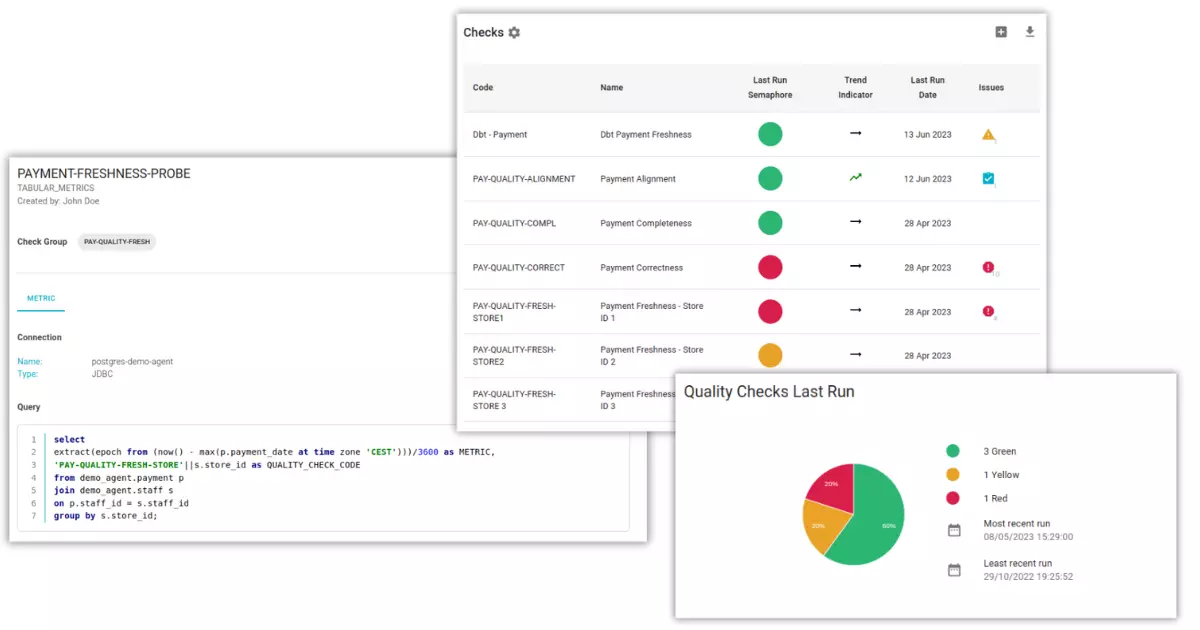

One of the key features of Blindata is its data quality module that helps you assess, validate, and monitor your data quality across your organization. Data quality is a crucial aspect of data governance that ensures that your data meets your needs and expectations. It means that your data is accurate, complete, consistent, and timely for your purposes.

Blindata’s data quality module allows you to define key quality indicators (KQIs) based on the data quality dimensions that are relevant to your context and goals. For example, you can measure the accuracy, completeness, consistency, uniqueness, timeliness, and validity of your data. You can also customize your dimensions and criteria according to your specific requirements.

Blindata’s data quality module also enables you to implement data quality probes that collect and calculate the metrics for your KQIs. You can use SQL queries to access and analyze your data sources and platforms, including Vertica.

Blindata’s data quality module also provides you with data observability features that help you track and report your data quality over time. You can set up alerts and notifications for potential errors or warnings, visualize your data quality metrics in dashboards and charts, and share your data quality indicators across your organization. Blindata integrates with the business glossary and data catalog modules to provide a comprehensive view of your data assets and their quality.